Nucleon Atomic

Distributed Computing with Nucleon Power

Nucleon Atomic is a framework for the distributed processing of large data sets across clusters of computers using the Map&Reduce programming model. It supports file systems, relational databases and NoSQL database systems. It is designed to scale from a single local server to hundreds of machines, each offering local computation and storage.

Nucleon Atomic’s MapReduce and DCF components were inspired by Apache Hadoop and Apache Spark. The Nucleon Atomic framework itself is mostly written in the C# programming language. Although Map&Reduce code is commonly written in .NET/C#, any programming language can be used with DCF to implement the “map” and “reduce” parts of the user’s program.

Connect Datasources

Connect to any of your database deployments: if you have access to the database instance, you can add it as a data source in BI Studio and run Map&Reduce code against it.

Architecture

Nucleon Atomic DCF (Distributed Computing Framework) is a highly scalable storage platform designed to process very large data sets across hundreds to thousands of computing nodes that operate in parallel. The term MapReduce refers to the two separate and distinct tasks, map and reduce, that Nucleon DCF programs perform.

Map&Reduce is a programming paradigm in which map and reduce jobs run in a distributed, parallel fashion. A MapReduce program is composed of a Map method that performs filtering and sorting and a Reduce method that performs a summary operation.
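To make the two phases concrete, here is a minimal single-process sketch of a word count. It is written in Python purely for illustration and does not use the Nucleon Atomic API; the function names `map_phase`, `shuffle`, `reduce_phase` and `word_count` are assumptions made for this example. In a real cluster the map calls would run on different nodes and the grouping step would move data between them.

```python
from collections import defaultdict


def map_phase(line):
    """Map: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Group emitted values by key (the step between map and reduce)."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce: summarize all values collected for one key (here, a sum)."""
    return key, sum(values)


def word_count(lines):
    """Run map, shuffle and reduce in sequence over a list of text lines."""
    pairs = [pair for line in lines for pair in map_phase(line)]
    grouped = shuffle(pairs)
    # Sorting the keys gives a stable, readable result order.
    return dict(reduce_phase(key, grouped[key]) for key in sorted(grouped))


if __name__ == "__main__":
    print(word_count(["map and reduce", "reduce and summarize"]))
```

The map step only looks at one line at a time, which is what allows the work to be split across nodes; only the reduce step needs to see every value for a given key.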

Nucleon DCF orchestrates the processing by marshaling the distributed servers (nodes), running the various tasks in parallel, managing all communications and data transfers between the various parts of the system, and providing for redundancy and fault tolerance. Nucleon DCF uses Microsoft WCF technology for distributed computing and communication between nodes.

Nucleon DCF (Map&Reduce) is inspired by the Apache Hadoop® system and works in a similar way. It is a pure Microsoft .NET, WCF-based framework that currently runs only in Microsoft Windows environments. Nucleon Map&Reduce can scale to hundreds of server cores for analyzing data or files using data streaming.

- Master Node

The Master Node, or Main Node (Job Tracker and Maintainer), is the point of interaction between clients and the map/reduce framework. When a map/reduce job is submitted, the job manager puts it in a queue of pending jobs, executes the jobs on a first-come, first-served basis, and manages the assignment of map and reduce tasks to the task trackers.

- Worker Node

Worker Nodes (Task Tracker and Manager) execute tasks upon instruction from the Master Node (Job Tracker) and also handle data movement between the map and reduce phases. Nucleon Map&Reduce always provides at least one local worker node for code execution, data computation and processing.
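The division of labour between the two node types can be sketched as a first-come, first-served job queue that hands tasks to workers. This is a conceptual Python sketch, not Nucleon DCF code: the `JobManager` class and its methods are hypothetical, and the round-robin assignment policy is an assumption, since the text does not say how tasks are matched to workers.

```python
from collections import deque


class JobManager:
    """Hypothetical master-node sketch: FIFO job queue plus task assignment."""

    def __init__(self, workers):
        if not workers:
            # Mirrors the guarantee of at least one (local) worker node.
            raise ValueError("at least one worker node is required")
        self.workers = list(workers)
        self.pending = deque()

    def submit(self, job_name, tasks):
        """Queue a job; jobs are later executed in submission order."""
        self.pending.append((job_name, list(tasks)))

    def run_next(self):
        """Take the oldest pending job and assign its tasks to the workers.

        Round-robin assignment is an assumption made for this sketch.
        Returns (job_name, {worker: [tasks]}) or None if the queue is empty.
        """
        if not self.pending:
            return None
        job_name, tasks = self.pending.popleft()
        assignment = {worker: [] for worker in self.workers}
        for index, task in enumerate(tasks):
            worker = self.workers[index % len(self.workers)]
            assignment[worker].append(task)
        return job_name, assignment


if __name__ == "__main__":
    manager = JobManager(["local-worker", "worker-2"])
    manager.submit("job-a", ["map-1", "map-2", "reduce-1"])
    manager.submit("job-b", ["map-1"])
    print(manager.run_next())
    print(manager.run_next())
```

A production scheduler would also track task status and reassign work from failed nodes; that is omitted here to keep the queueing behaviour visible.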

Modules

The base Nucleon Atomic framework is composed of the following modules:

- Datasource Connections – allows you to connect to any data source.

- Atomic DCF – for building distributed master/worker nodes using WCF technology.

- Atomic MapReduce – an implementation of the MapReduce programming model for large-scale data processing.

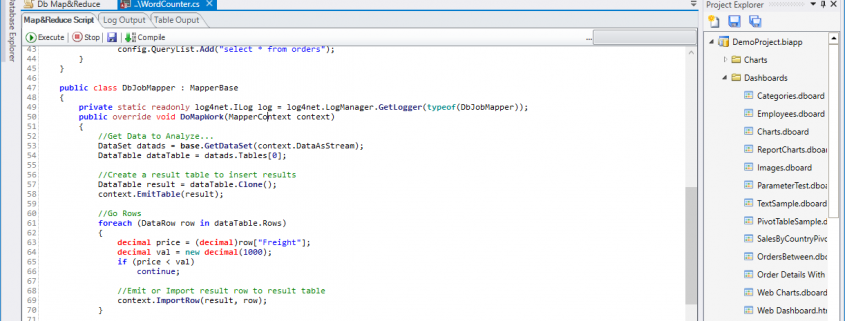

Database System

A MapReduce application can execute Map&Reduce code against database systems and process their tables and views. The rows of the database table or view result are distributed over the worker nodes. The results of the map nodes are then filtered on the main node.
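The flow described here (read a table, spread its rows over the workers, combine and filter on the main node) can be sketched as follows. This is an illustrative Python example using an in-memory SQLite database, not the Nucleon Atomic API. The `orders` table, its data, and the helper names `partition`, `map_partition` and `reduce_on_main` are all assumptions made for the example, and the workers are simulated with ordinary function calls.

```python
import sqlite3


def partition(rows, n_workers):
    """Split the rows into n_workers slices, one per worker node."""
    return [rows[i::n_workers] for i in range(n_workers)]


def map_partition(rows):
    """Map step run on one worker: total amount per customer in its slice."""
    totals = {}
    for customer, amount in rows:
        totals[customer] = totals.get(customer, 0) + amount
    return totals


def reduce_on_main(partials, min_total):
    """Main-node step: merge the partial totals, then filter the result."""
    merged = {}
    for partial in partials:
        for customer, total in partial.items():
            merged[customer] = merged.get(customer, 0) + total
    return {c: t for c, t in merged.items() if t >= min_total}


def run(n_workers=3, min_total=50):
    """Load a small example table and process it in simulated worker slices."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (customer TEXT, amount INTEGER)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?)",
        [("ann", 30), ("bob", 20), ("ann", 40), ("cy", 10), ("bob", 35)],
    )
    rows = conn.execute("SELECT customer, amount FROM orders").fetchall()
    conn.close()
    partials = [map_partition(chunk) for chunk in partition(rows, n_workers)]
    return reduce_on_main(partials, min_total)


if __name__ == "__main__":
    print(run())
```

Because each worker returns per-customer partial totals rather than raw rows, the main node only has to merge small dictionaries before applying the final filter.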

File System

A MapReduce application can execute Map&Reduce code against file systems and process files. The file content is distributed over the worker nodes, and the results are filtered on the main node.
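For files, the same idea applies to chunks of lines instead of table rows. The sketch below is again plain Python rather than Nucleon Atomic code. It assumes a hypothetical log file whose lines start with a severity level, and the names `split_lines`, `map_chunk`, `merge_and_filter` and `process_file` are invented for this example.

```python
def split_lines(lines, n_workers):
    """Cut the file content into contiguous chunks, one per worker node."""
    size = max(1, -(-len(lines) // n_workers))  # ceiling division
    return [lines[i:i + size] for i in range(0, len(lines), size)]


def map_chunk(chunk):
    """Map step on one worker: count lines per leading severity level."""
    counts = {}
    for line in chunk:
        level = line.split(" ", 1)[0]
        counts[level] = counts.get(level, 0) + 1
    return counts


def merge_and_filter(partials, keep):
    """Main-node step: merge the per-chunk counts, then keep selected levels."""
    merged = {}
    for partial in partials:
        for level, count in partial.items():
            merged[level] = merged.get(level, 0) + count
    return {level: n for level, n in merged.items() if level in keep}


def process_file(path, n_workers=2, keep=("ERROR", "WARN")):
    """Read a text file, process it in simulated worker chunks, and filter."""
    with open(path, encoding="utf-8") as handle:
        lines = [line.rstrip("\n") for line in handle if line.strip()]
    partials = [map_chunk(chunk) for chunk in split_lines(lines, n_workers)]
    return merge_and_filter(partials, keep)
```

Calling `process_file("app.log")` on such a file would return the number of `ERROR` and `WARN` lines; the file name is only a placeholder.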

Main Features

- Job Distribution, Reduction and Finalization

- C# Code for Map&Reduce

- Query, Filter, Compute and Visualize Results

- Map&Reduce to File System

- Map&Reduce to Database Tables